I apologize in advance for using too many superlatives in this article. I might be excused simply because what’s happening in the field of artificial intelligence is truly mindboggling and unprecedented.

It’s not so much what AI will be able to do as the hardware that will drive it. This is the hard edge of the technology, and those most intimately involved in creating that hardware say this is just the beginning.

“Nvidia’s chips are improving at such a staggering pace that it defies any historical comparison,” reports Axios.

This isn’t corporate boosterism. That improvement has lifted Nvidia’s annual revenue from $27 billion in 2022 to $216 billion last year, “a growth rate that has translated into a $4.5 trillion market value,” says Axios. The market value is expected to increase to $6 trillion by the end of the year.

Nvidia just introduced its “Blackwell” chip, which is expected to produce $1 trillion in backlog orders by the end of 2026.

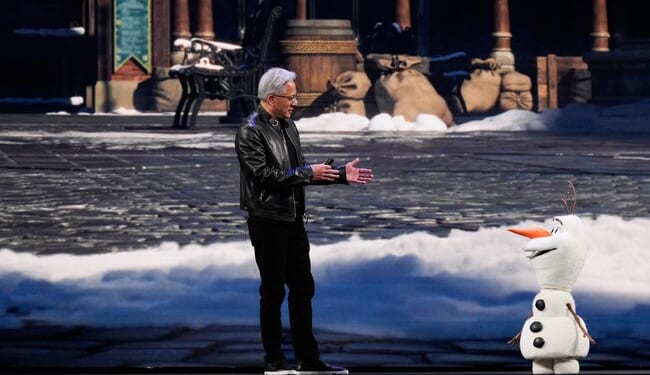

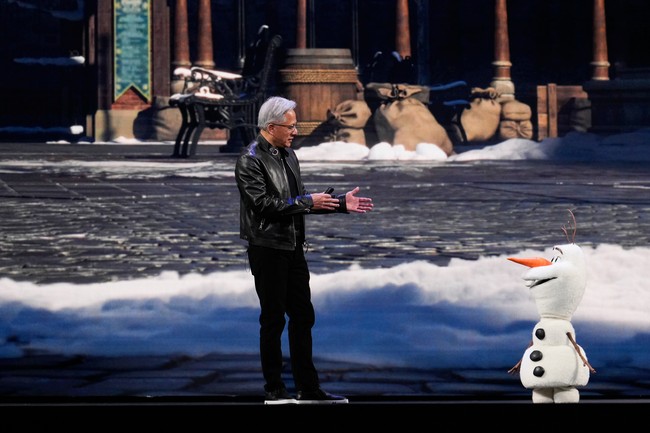

“We reinvented computing, just like the PC (personal computer) revolution and the internet revolution,” the company’s CEO, Jensen Huang, said at a tech conference in San Jose. “We are now at the beginning of a new platform change.”

Blackwell has generated so much excitement because it delivers superior performance to other chips while being far more energy-efficient. And with AI data centers pulling extraordinary amounts of power from local power grids, this is absolutely critical to maintaining AI’s momentum.

The new chips are what are known as inference processors. “Once an AI tool is trained, inference chips enable the technology to take what it has learned and produce responses, whether it be writing a document or creating an image, more efficiently than the processors that were used while the large language models were being built,” reports the Associated Press.

“The inference inflection has arrived,” Huang said.

The game is on with tech giants fighting for position as the AI playing field has once again changed dramatically. “Nvidia isn’t going to cede any market share to Google or Meta,” said Wedbush Securities analyst Dan Ives.

Nvidia, based in Santa Clara, California, has leveraged its dominant position in the AI chip market to drive that revenue growth and its $4.5 trillion market value.

But Nvidia’s once-torrid stock has cooled since the company briefly became the first to surpass a $5 trillion market value last October, amid worries that the AI buzz is overblown.

“This is just a white-knuckle period for the technology industry,” Ives said.

Even after Nvidia released a quarterly report in late February that far exceeded analyst forecasts and management provided a rosy outlook, the company’s stock price is still down by 6% from where it stood before those numbers came out. After Huang’s disclosure about an anticipated doubling in backlogged chip orders, Nvidia’s shares edged up by nearly 2% to close Monday at $183.22.

“Chips are being redesigned because efficiency determines how fast intelligence can scale,” Huang wrote in a rare blog post published last week. “Energy becomes central because it sets the ceiling on how much intelligence can be produced at all.”

Chips that use energy more efficiently will win out, as ridiculous AI power demands will soon make consumers howl, forcing Congress to act. If this were the 1960s, we’d speedily build more power plants, including coal and oil, but the permitting process today makes it an impossible task to keep pace with AI energy needs.

Energy efficiency is no longer a nice-to-have; it’s a must-have.

Electricity is physically limited. AI demand appears unlimited. That makes energy efficiency the backbone of society’s astonishing growth in AI computing power.

It’s like going from a Model T to a Tesla in under a decade — instead of more than a century.

If cars’ fuel efficiency had improved as swiftly as chips, “we’d be driving to the moon and back in one gallon of gas,” said Josh Parker, head of sustainability at Nvidia, the world’s leader in AI computation.

No matter how efficient AI chips get at using energy, the Jevons Paradox comes into play: greater energy efficiency often creates more demand for energy rather than leading to energy savings.

In the end, even with inference chips, AI will be stuck with the same limiting factors: too much power demanded and not enough power generated.
